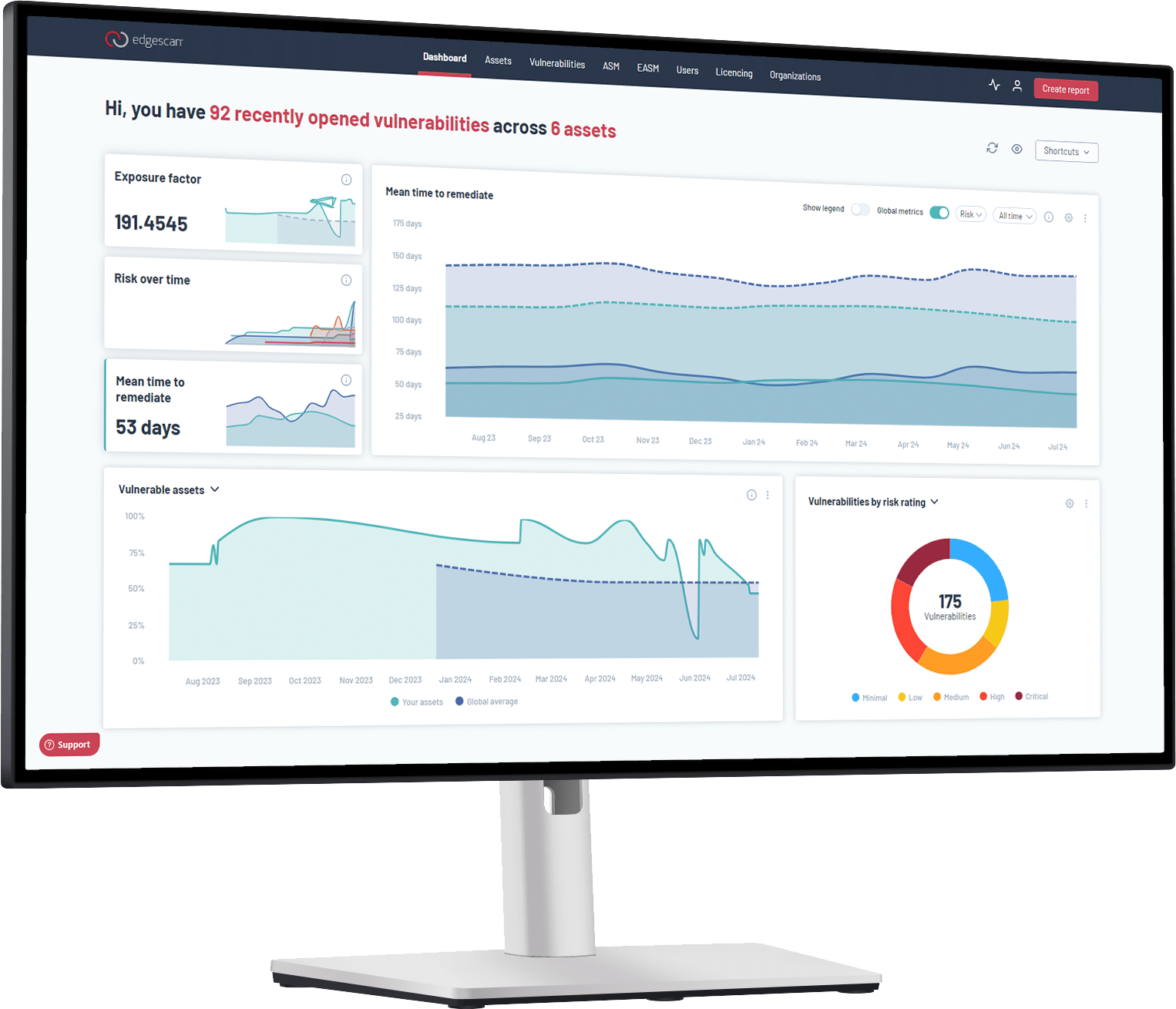

Recently Edgescan deployed two tools to help with risk prioritization, namely EPSS and CISA KEV mapping. Both can be combined with CVSS and EVSS (Edgescan Validated Security Score) to help prioritize vulnerabilities across our clients’ estates. As always, we are working on a few more tools to help with prioritization and we will formally announce them as they roll out. Another model I would like to introduce for consideration is SSVC.

What is SSVC?

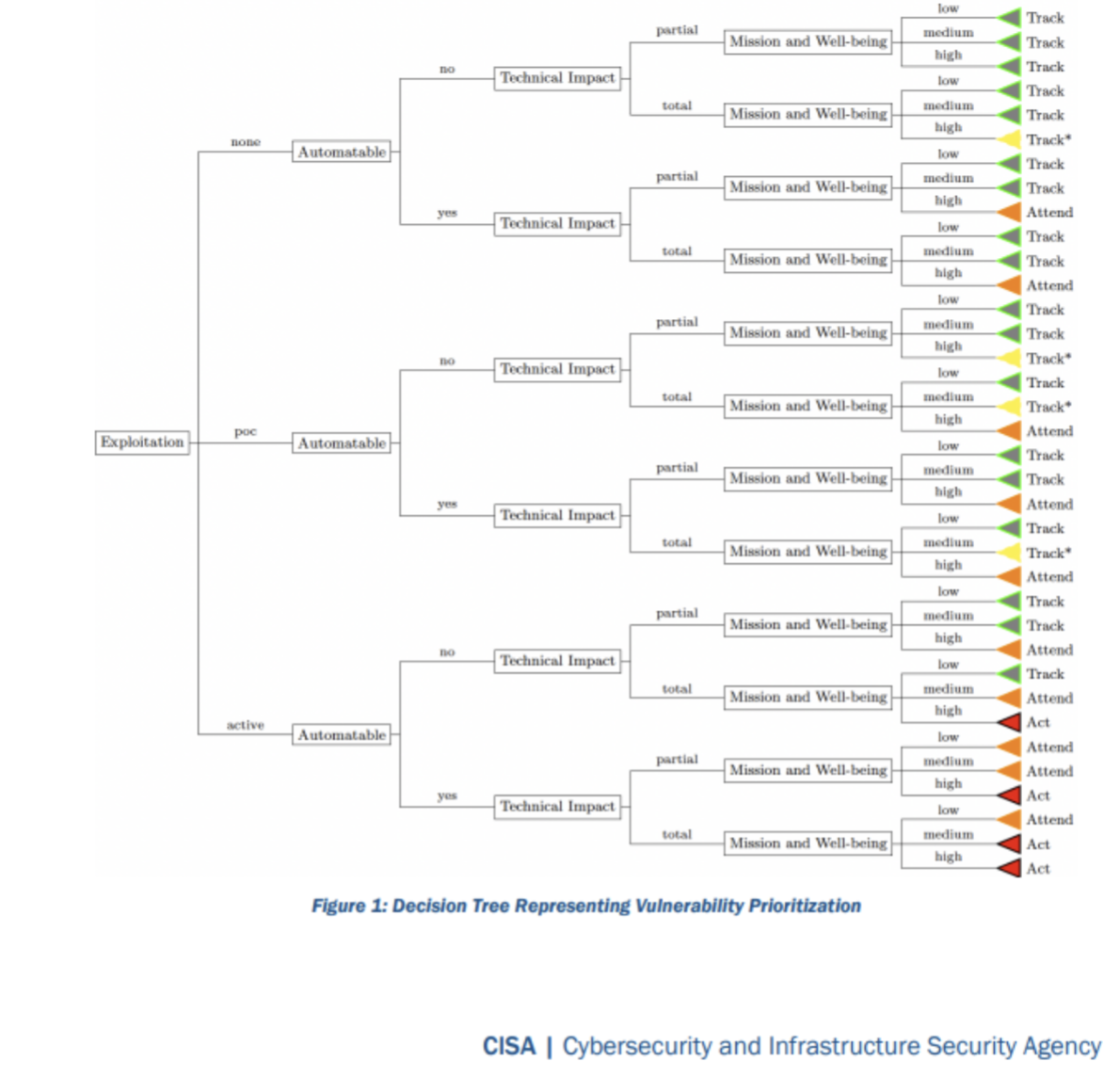

Another model used to prioritize vulnerabilities is the CISA SSVC (Stakeholder-Specific Vulnerability Categorization). SSVC is a customized decision tree model that assists in prioritizing vulnerability response, and it is currently being used by the United States government and its agencies. The goal of SSVC is to assist in prioritizing the remediation of a vulnerability based on the impact successful exploitation would have. Check out the SSVC guidelines.

I find that the SSVC model looks very promising.

The SSVC model is based on a number of environmental attributes associated with the discovered vulnerability as follows:

(State of) Exploitation: Evidence of active exploitation of a vulnerability. Does a publicly available proof of concept (PoC) exist, or is the vulnerability actively being exploited? If no PoC exists, is there reliable evidence that it is being exploited?

Technical Impact: Technical Impact of exploiting the vulnerability

This is similar to severity in CVSS and is split into “partial” or “total” impact. “Total” means that exploitation gives the attacker total control of the component being attacked.

Automatable: Can an attacker rapidly cover an organization’s estate and commit widespread attacks?

Can the exploit be automated? This obviously brings speed and scale into account. A vulnerability is considered not automatable if steps 1-4 of the kill chain (reconnaissance, weaponization, delivery, and exploitation) cannot be reliably automated for it. This also has a contextual aspect in terms of vulnerability chaining (combining vulnerabilities) and the context in which the vulnerability is present. Every system is different and there may be compensating controls (e.g. multifactor authentication) which would prevent automation of the exploit.
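The automatable test described above can be sketched as a small predicate. This is only an illustration under my own assumptions: the `KillChainAssessment` class, its field names and the `blocked_by_controls` flag are hypothetical and do not come from the SSVC guide.

```python
from dataclasses import dataclass


@dataclass
class KillChainAssessment:
    """Hypothetical record: can each of kill chain steps 1-4 be reliably automated?"""
    reconnaissance: bool
    weaponization: bool
    delivery: bool
    exploitation: bool
    # Assumption: True when a compensating control (e.g. MFA) prevents
    # automated exploitation in this particular environment.
    blocked_by_controls: bool = False


def is_automatable(assessment: KillChainAssessment) -> bool:
    """Automatable only if all four steps can be automated and nothing blocks them."""
    steps = (
        assessment.reconnaissance,
        assessment.weaponization,
        assessment.delivery,
        assessment.exploitation,
    )
    return all(steps) and not assessment.blocked_by_controls
```

The `blocked_by_controls` flag is where the contextual part lives: the same vulnerability may be automatable on one system and not on another.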

Mission Prevalence: Impact on mission essential functions of relevant entities

In CISA terms, a mission essential function (MEF) is a function “directly related to accomplishing the organization’s mission as set forth in its statutory or executive charter.” To me this means: if this system was compromised, would it affect my organisation’s “mission”? Could my business still operate? Will it adversely affect my business if a given system was taken over? This is highly contextual and is related to DR (Disaster Recovery) and BCP (Business Continuity Planning), which determine the importance of a system to an organization.

Public Well-Being Impact: Impacts of affected system compromise on humans

A cornerstone of information security, but often overlooked and rarely discussed in most organisations. Would the exploit put folks in peril? Certainly, this is a case-by-case contextual decision.

Mitigation Status: Status of available mitigations, workarounds, or fixes for the vulnerability

Mitigation status measures the degree of difficulty to mitigate the vulnerability in a timely manner. We examine whether there is a workable mitigation for the exploit. Certainly, this is contextual and unique to each organisation, as everyone is different.

Once all this metadata is compiled per vulnerability, a decision can be made based on a decision tree documented here: https://www.cisa.gov/sites/default/files/publications/cisa-ssvc-guide%20508c.pdf

This results in a decision to “track”, “track*”, “attend” or “act”, with “act” being the most urgent (fix the issue ASAP).
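To make the idea concrete, here is a rough sketch of how the compiled attributes could be looked up against a decision table. Everything in it is an assumption of mine: the `DecisionPoints` class, the string values used for each attribute, and above all the two entries in `EXAMPLE_TREE`, which are placeholders showing the shape of the table and are not transcribed from the CISA guide. The real mapping should be taken from the decision tree in the guide linked above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict


class Decision(Enum):
    TRACK = "track"
    TRACK_STAR = "track*"
    ATTEND = "attend"
    ACT = "act"


@dataclass(frozen=True)
class DecisionPoints:
    """The attributes described above; the value vocabularies are assumed."""
    exploitation: str
    technical_impact: str
    automatable: bool
    mission_prevalence: str
    public_wellbeing_impact: str
    mitigation_status: str


WORST_CASE = DecisionPoints("active", "total", True, "high", "high", "none")
BENIGN_CASE = DecisionPoints("none", "partial", False, "low", "low", "available")

# Placeholder entries only; fill in the real table from the CISA guide.
EXAMPLE_TREE: Dict[DecisionPoints, Decision] = {
    WORST_CASE: Decision.ACT,
    BENIGN_CASE: Decision.TRACK,
}


def decide(points: DecisionPoints, tree: Dict[DecisionPoints, Decision]) -> Decision:
    """Look up the outcome for one vulnerability; fail loudly if it is not covered."""
    if points not in tree:
        raise LookupError(f"no decision recorded for {points}")
    return tree[points]
```

Keeping the table as data rather than nested `if` statements means it can be reviewed against the guide line by line, and gaps show up as errors instead of silent defaults.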

Overall, this is a very clever and simple model. But there are challenges when it comes to enterprise/high-volume vulnerability management:

We need to bear in mind that risk is contextual, and rightly so. But we don’t always value, store and leverage contextual data enough to become effective:

- A lot of metadata is required to formulate a decision. Cataloguing it is possible but difficult.

- A lot of this metadata is contextual, based on where a vulnerability is discovered. This includes items such as a system’s mission prevalence, public well-being impact and even whether the exploit is automatable when kill chains are considered.

- This model is super effective for a company which has the required contextual information at hand and at enterprise scale. From experience, this is often not the case.

- Effective wide-scale use of this model would require both DR (Disaster Recovery) metrics and identification of AAA systems/components across the enterprise.

- Simple items such as an SBOM (Software Bill of Materials), threat modelling (to discuss mitigations) and a mission-critical asset inventory would be required for this to be effective across the enterprise.

- Storing and utilising machine-readable metadata containing both business and technical attributes of end-to-end systems across the organization would be required to make this effective.
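As a rough illustration of the last point, a machine-readable record per asset might look something like the sketch below, together with a check for which context is still missing. The `AssetContext` class and its fields are hypothetical; they are one possible shape for such a record, not a standard schema.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class AssetContext:
    """Hypothetical per-asset record mixing business and technical attributes."""
    asset_id: str
    mission_prevalence: Optional[str] = None           # from DR/BCP planning
    public_wellbeing_impact: Optional[str] = None      # case-by-case assessment
    compensating_controls: Optional[List[str]] = None  # e.g. ["mfa"]
    sbom_available: Optional[bool] = None


def missing_context(asset: AssetContext) -> List[str]:
    """Return the names of attributes that are still unknown (None)."""
    return [f.name for f in fields(asset) if getattr(asset, f.name) is None]


# Example: an asset where only the mission prevalence has been recorded.
asset = AssetContext(asset_id="web-01", mission_prevalence="essential")
gaps = missing_context(asset)
```

Running a check like this across an inventory gives a quick view of how far an organisation is from having enough context to apply SSVC at scale.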

Overall, my conclusion circles around the fact that risk is contextual, and it would be rare for an organisation to store and provide adequate meta/attribute data to automate SSVC. Automation of frameworks such as SSVC is how we all can win, but it is an uphill battle…