Data breaches often trace back to preventable coding errors: hardcoded credentials in source code, unencrypted data in transit, SQL injection from unsanitised inputs, or API endpoints without authentication checks.

The common factor isn’t developer carelessness. It’s that security controls weren’t built into the development process itself. Security reviews happen after code is written, when fixes are expensive and timelines are compressed.

Protecting sensitive data requires integrating security into the SDLC from initial design through production deployment – not as gates that slow delivery, but as automated guardrails that prevent data exposure vulnerabilities from reaching production.

The Data Exposure Risk in Modern Development

Development velocity has increased dramatically. Teams deploy multiple times daily through CI/CD pipelines. Microservices architectures create dozens of APIs processing sensitive data. Cloud-native applications span multiple services and datastores.

This velocity and complexity create data exposure risk:

According to Edgescan’s 2025 Vulnerability Statistics Report, SQL injection still accounts for 28.28% of all critical and high severity application vulnerabilities, despite decades of awareness and readily available parameterised query libraries that eliminate the vulnerability.

14.8% of web application and API vulnerabilities are critical or high severity, many directly enabling unauthorised data access.

Malicious file upload represents 13.56% of critical application vulnerabilities – often enabling remote code execution that provides attackers complete access to backend databases and data stores.

These aren’t sophisticated zero-days. They’re preventable implementation errors that automated security checks catch reliably – if those checks are integrated into development workflows.

Secure Data Storage: Getting the Basics Right

Protecting data at rest starts with fundamental controls:

Encryption for Sensitive Data: Database encryption, file system encryption, and application-level encryption for PII, financial data, health records, and other regulated information. Modern cloud platforms make this straightforward through managed encryption services.

Credential Management: Never store credentials, API keys, or secrets in source code repositories. Use secrets management services (AWS Secrets Manager, Azure Key Vault, HashiCorp Vault) that provide encrypted storage, access controls, and audit logs.

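Parameterised Queries: Always use parameterised queries or ORMs (Object-Relational Mappers) for database interactions. Never construct SQL queries through string concatenation with user inputs. Applied consistently, this single practice eliminates SQL injection through data values; the few places where placeholders cannot be used, such as dynamic table or column names, need an allow-list instead.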

Access Controls: Implement least-privilege database access. Application service accounts should only access the specific databases and tables they require, with permissions limited to necessary operations (read vs. write vs. delete).

Data Classification: Tag sensitive data in datastores so access controls, encryption requirements, and monitoring can be applied appropriately. Not all data carries equal risk.

The challenge isn’t knowing these controls exist. It’s ensuring they’re consistently applied across dozens of services built by multiple teams working in parallel.
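As an illustration of the parameterised query control above, here is a minimal sketch using Python's standard `sqlite3` module. The `users` table, its columns, and the helper names are hypothetical and exist only for this example; other database drivers use the same idea with their own placeholder syntax.

```python
import sqlite3


def find_user_by_email(conn: sqlite3.Connection, email: str) -> list[tuple]:
    """Look up a user with a bound parameter instead of string concatenation."""
    # The "?" placeholder keeps the input as data; it is never parsed as SQL.
    cursor = conn.execute(
        "SELECT id, email FROM users WHERE email = ?",
        (email,),
    )
    return cursor.fetchall()


def build_demo_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
    conn.executemany(
        "INSERT INTO users (email) VALUES (?)",
        [("alice@example.com",), ("bob@example.com",)],
    )
    return conn
```

With this shape, a classic payload such as `' OR '1'='1` is compared literally against the `email` column and matches no rows, rather than rewriting the query.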

Encryption in Transit: Protecting Data Movement

Data moves constantly in modern applications: from users to frontends, between microservices, to third-party APIs, and to data processing pipelines.

Each data movement represents potential exposure:

HTTPS Everywhere: All external-facing endpoints must use TLS, and the same applies to “internal” or “test” environments that may process real data. Certificate management should be automated through services like Let’s Encrypt or cloud provider certificate managers.

Service-to-Service Encryption: Backend microservice communications should use mutual TLS or encrypted service meshes. Don’t assume internal networks are trusted – lateral movement after initial compromise is standard attacker behaviour.

API Authentication: Every API endpoint processing sensitive data requires authentication. Edgescan’s data shows that 20% of critical PTaaS findings involve “unauthenticated access to sensitive resources” – endpoints that expose data without verifying caller identity.

Secure Credential Transmission: API keys and authentication tokens transmitted in headers or request bodies must be encrypted in transit. Never send credentials in URL parameters that may be logged.

Third-Party Integration Security: Data sent to third-party services must use encrypted channels. Validate third-party TLS certificates and maintain approved third-party integration lists.

The operational challenge is maintaining these controls as services evolve. Manual security reviews can’t keep pace with daily deployments.
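One way to keep the transport controls above consistent is to build TLS settings in a single shared helper rather than per service. The sketch below uses Python's standard `ssl` module; the helper name and the TLS 1.2 floor are assumptions for illustration, not a stated Edgescan recommendation.

```python
import ssl


def build_client_tls_context() -> ssl.SSLContext:
    """Return a client-side TLS context that verifies the server certificate."""
    # create_default_context() turns on certificate verification and
    # hostname checking for client connections.
    context = ssl.create_default_context()
    # Refuse protocol versions older than TLS 1.2 (an assumed policy floor).
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

The resulting context can be passed to standard library clients, for example `http.client.HTTPSConnection(host, context=context)`, so that outbound calls to third parties fail closed when a certificate does not validate.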

Automated Security Checks in CI/CD

Integrating automated security checks into CI/CD pipelines catches data exposure vulnerabilities before production deployment:

Static Application Security Testing (SAST): Analyses source code for security vulnerabilities without executing the application. SAST tools identify hardcoded secrets, SQL injection patterns, weak encryption usage, and other implementation flaws.

Secrets Scanning: Automated tools scan code commits for accidentally committed credentials, API keys, and secrets. These should block commits containing secrets and alert security teams.

Dependency Scanning: Third-party libraries and frameworks introduce vulnerabilities. Software Composition Analysis (SCA) tools identify vulnerable dependencies and recommend secure versions. Edgescan’s data shows 40,009 new CVEs were published in 2024 – many affecting common libraries developers use.

Dynamic Application Security Testing (DAST): Tests running applications for vulnerabilities by simulating attacks. DAST identifies SQL injection, authentication bypasses, and other runtime vulnerabilities that SAST might miss.

Infrastructure as Code (IaC) Scanning: Validates security configurations in cloud infrastructure definitions before deployment. Catches misconfigured storage buckets, overly permissive IAM policies, and unencrypted data stores.
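To make the secrets scanning idea above concrete, here is a deliberately small sketch of a pattern-based check. The two rules and the function name are illustrative assumptions; dedicated scanners ship far larger rule sets and add entropy checks, so this is not a substitute for them.

```python
import re

# Illustrative patterns only; real scanners use much broader rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hardcoded_credential": re.compile(
        r"(?i)\b(password|passwd|secret|api_key)\b\s*=\s*['\"][^'\"]{8,}['\"]"
    ),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line matching a pattern."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings
```

A pre-commit hook or pipeline step could run a check like this over the changed files and fail when the findings list is non-empty. Lines that read a value from the environment, such as `password = os.environ["DB_PASSWORD"]`, do not match the hardcoded credential rule because no quoted literal follows the assignment.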

The key is making these checks non-blocking for most issues while blocking only on critical data exposure risks that require immediate attention:

Critical Severity: Block deployments for hardcoded database credentials, unauthenticated sensitive endpoints, or SQL injection vulnerabilities.

High Severity: Alert security team but allow deployment with remediation tracking for issues like missing rate limits or weak encryption configurations.

Medium/Low Severity: Log findings for routine remediation cycles without blocking deployment velocity.

This balanced approach prevents data exposure vulnerabilities from reaching production while avoiding the “security as deployment blocker” dynamic that leads teams to bypass security controls.
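The severity tiers above can be sketched as a small gate function that a pipeline step might call after collecting scanner output. The `Finding` and `GateDecision` types are hypothetical; real scanners each have their own output formats that would need mapping into this shape.

```python
from dataclasses import dataclass, field
from enum import Enum


class Severity(Enum):
    CRITICAL = "critical"
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


@dataclass(frozen=True)
class Finding:
    title: str
    severity: Severity


@dataclass
class GateDecision:
    blocking: list[str] = field(default_factory=list)  # stop the deployment
    alerts: list[str] = field(default_factory=list)    # notify security, track fix
    logged: list[str] = field(default_factory=list)    # routine remediation

    @property
    def allow_deploy(self) -> bool:
        # Deployment proceeds only when nothing critical was found.
        return not self.blocking


def evaluate_gate(findings: list[Finding]) -> GateDecision:
    """Sort findings into block, alert, and log buckets by severity."""
    decision = GateDecision()
    for finding in findings:
        if finding.severity is Severity.CRITICAL:
            decision.blocking.append(finding.title)
        elif finding.severity is Severity.HIGH:
            decision.alerts.append(finding.title)
        else:
            decision.logged.append(finding.title)
    return decision
```

A CI job would typically exit with a non-zero status when `allow_deploy` is false and open tracking tickets for the entries in `alerts`.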

Secure Coding Practices: Making Security the Default Path

Automated checks catch many data exposure vulnerabilities, but some require design-level decisions:

Input Validation: Validate all user inputs against expected formats, lengths, and character sets. Reject invalid input rather than attempting sanitisation, which often fails. This stops many SQL injection, command injection, and other input-based attacks before they reach application logic, and it complements parameterised queries rather than replacing them.

Output Encoding: Encode data appropriately for its output context (HTML, JavaScript, SQL, etc.). This prevents cross-site scripting and injection attacks.

Authentication and Authorisation: Implement authentication on all sensitive endpoints. Verify not just that users are authenticated but that they’re authorised to access specific resources. Edgescan’s data shows horizontal privilege escalation (accessing other users’ data) is a common data exposure vulnerability.

Error Handling: Don’t expose sensitive information in error messages. Database errors, stack traces, and debugging information often leak file paths, database schema, or internal system details attackers leverage.

Logging Sensitive Data: Never log PII, credentials, financial data, or other sensitive information. Log authentication attempts and authorisation decisions for security monitoring without capturing the actual sensitive data.

Rate Limiting: Implement rate limiting on all data access APIs to prevent automated data scraping and brute-force attacks.

The challenge is making secure coding practices the easiest path. Security frameworks, secure code templates, and reusable authentication libraries help teams build securely by default rather than requiring security expertise for every implementation decision.
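As one example of a reusable building block for the input validation practice above, the sketch below validates a username against an allow-list and rejects anything else. The format rule (3 to 32 letters, digits, underscores, or hyphens) and the names are assumptions chosen for illustration.

```python
import re

# Hypothetical rule: usernames are 3-32 characters of letters, digits, "_" or "-".
USERNAME_PATTERN = re.compile(r"[A-Za-z0-9_-]{3,32}")


class ValidationError(ValueError):
    """Raised when input does not match the expected format."""


def validate_username(raw: str) -> str:
    """Return the input unchanged if it matches the allow-list, else reject it."""
    # fullmatch() requires the whole string to match, so a valid prefix
    # followed by a payload or a trailing newline is still rejected.
    if not isinstance(raw, str) or USERNAME_PATTERN.fullmatch(raw) is None:
        # The message deliberately does not echo the rejected input.
        raise ValidationError("username has an unexpected format")
    return raw
```

The function rejects rather than repairs: it never tries to strip or escape characters, which keeps the behaviour predictable and easy to test.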

Business Logic: What Automated Checks Miss

Automated security checks reliably catch technical vulnerabilities. They completely miss business logic flaws that enable data exposure.

Business logic vulnerabilities exploit how applications are designed to work. Examples include:

Authorisation Bypass: Skipping workflow steps to access data without proper authentication checks. For instance, accessing profile endpoints directly without completing login verification.

Parameter Tampering: Manipulating user IDs, account numbers, or object references in API calls to access other users’ data. The technical implementation may be correct (parameterised queries, proper encoding), but the business logic fails to verify the requesting user should access the requested resource.

Workflow Manipulation: Exploiting state management flaws to skip payment steps while still receiving goods, or accessing data that should only be available after completing specific approval workflows.

Export Abuse: Using legitimate data export features to exfiltrate more data than intended by combining multiple exports or exploiting missing pagination controls.
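The parameter tampering case above comes down to a missing ownership check. Here is a minimal sketch of that check, using a hypothetical in-memory `INVOICES` store in place of a database; the types and names are assumptions for illustration only.

```python
from dataclasses import dataclass


class Forbidden(Exception):
    """Raised when the caller may not access the requested resource."""


@dataclass(frozen=True)
class Invoice:
    invoice_id: int
    owner_id: int
    total_cents: int


# Hypothetical in-memory store standing in for a database.
INVOICES = {
    101: Invoice(invoice_id=101, owner_id=1, total_cents=2500),
    102: Invoice(invoice_id=102, owner_id=2, total_cents=9900),
}


def get_invoice(requesting_user_id: int, invoice_id: int) -> Invoice:
    """Fetch an invoice only if it belongs to the authenticated caller."""
    invoice = INVOICES.get(invoice_id)
    # Treat "missing" and "not yours" identically so the response does not
    # reveal which invoice IDs exist.
    if invoice is None or invoice.owner_id != requesting_user_id:
        raise Forbidden("invoice not available")
    return invoice
```

The important design point is that `requesting_user_id` comes from the authenticated session, never from the request parameters the caller controls. A scanner sees nothing wrong with the version that omits the ownership comparison, which is why this class of flaw needs human review.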

Edgescan’s 2025 report shows business logic vulnerabilities account for 11% of critical findings discovered through expert penetration testing. These remain completely invisible to automated SAST and DAST tools because the application functions “as coded” – there’s no technical flaw to detect.

Protecting against business logic data exposure requires:

Threat Modeling: During the design phase, explicitly map data flows and identify where business logic assumptions could be exploited to access unauthorised data.

Expert Security Reviews: CREST- and OSCP-certified penetration testers specifically target business logic flaws through manual testing that considers both intended and adversarial use patterns.

Design Documentation: Document authorisation logic, workflow dependencies, and data access assumptions so security reviewers can validate implementation matches intent.

Remediation: The Critical Missing Piece

Finding data exposure vulnerabilities in development is necessary but insufficient. They must be remediated.

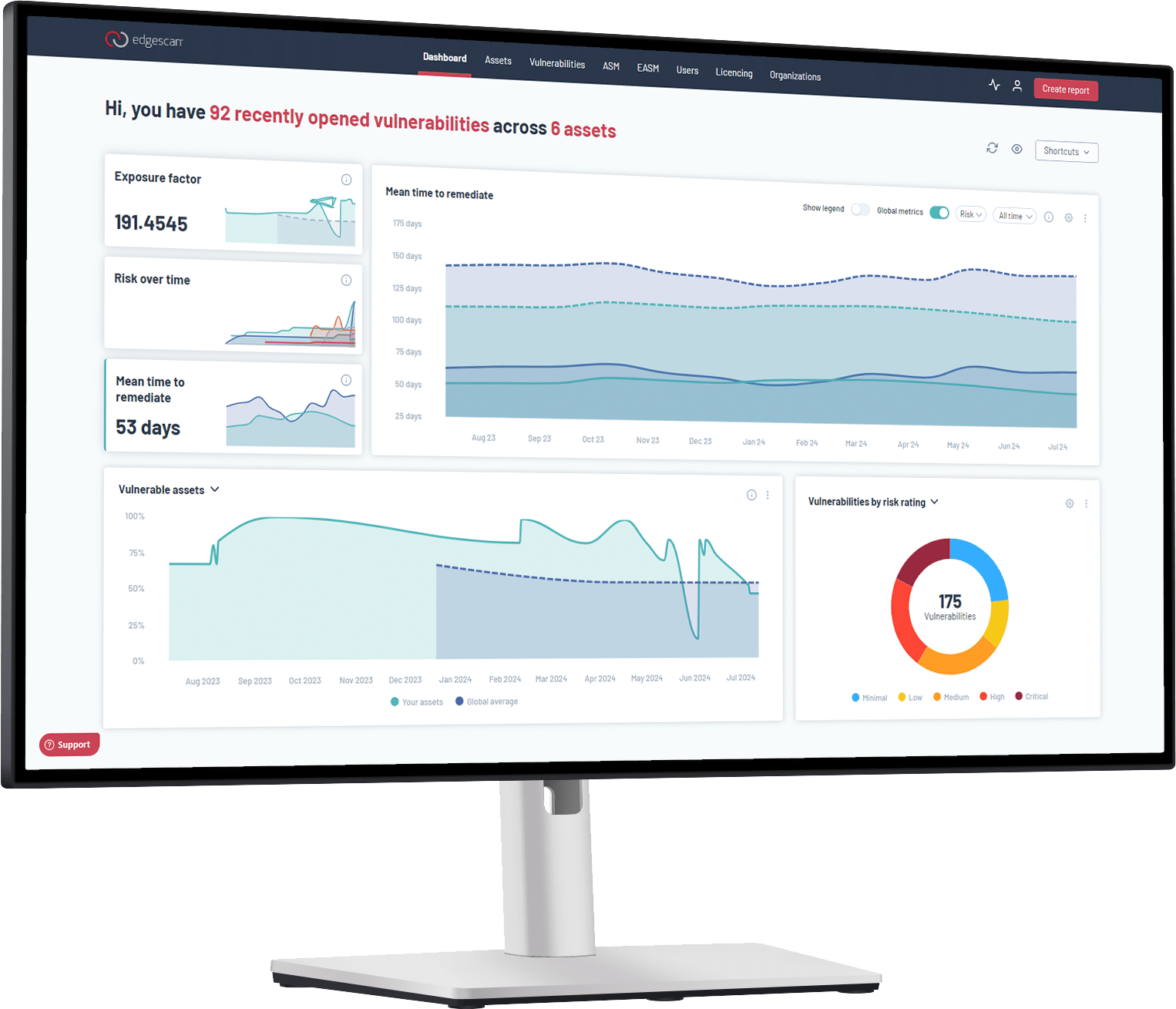

Edgescan’s data reveals concerning timelines:

74.3 days average MTTR for critical application vulnerabilities. Even after discovery, data exposure vulnerabilities persist for over two months on average.

45.4% of enterprise vulnerabilities remain unresolved after 12 months. For large organisations, nearly half the vulnerability backlog is still open a year after discovery.

For development teams, improving these metrics requires:

Clear Ownership: Every vulnerability finding needs an assigned developer responsible for remediation with clear deadlines.

Remediation Guidance: Security tools should provide specific fix guidance, not just identify problems. For SQL injection findings, show the vulnerable code and provide the correct parameterised query implementation.

Validation: After fixes are deployed, automated rescanning should confirm vulnerabilities are actually resolved. Edgescan provides unlimited retesting as part of PTaaS.

Metrics: Track fix velocity by severity level and vulnerability type to identify where remediation processes need improvement or where developers need additional training.

Integration: Feed security findings directly into the ticketing systems developers already use (Jira, GitHub Issues) rather than separate security portals that create workflow friction.

The goal is making security fixes part of normal development workflow, not a separate security debt backlog that grows perpetually.
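The fix velocity metric described above can be computed from exported findings with very little code. The sketch below assumes each finding is a dictionary with `severity`, `found_on`, and `fixed_on` keys; that shape is hypothetical and would need adapting to whatever a given scanner or ticketing system exports.

```python
from collections import defaultdict
from datetime import date
from statistics import mean


def mean_days_to_fix(findings: list[dict]) -> dict[str, float]:
    """Average days between discovery and fix, grouped by severity.

    Findings without a "fixed_on" date are still open and are skipped here;
    they should be reported separately as backlog.
    """
    durations = defaultdict(list)
    for finding in findings:
        if finding.get("fixed_on") is None:
            continue
        days = (finding["fixed_on"] - finding["found_on"]).days
        durations[finding["severity"]].append(days)
    return {severity: mean(days) for severity, days in durations.items()}
```

For example, two critical findings fixed after 10 and 30 days give a mean of 20 days for the critical tier. Reporting open findings separately matters because averaging only closed items can make remediation look faster than it is.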

Practical Implementation Steps

Building data protection into the SDLC requires specific capabilities:

Integrate SAST/DAST into CI/CD: Make security scans automatic parts of build and deployment pipelines, not manual pre-release exercises.

Implement Secrets Management: Remove hardcoded credentials from source code and migrate to secrets management services with automated rotation.

Deploy API Security Testing: Complement general DAST with API-specific security testing that validates authentication, authorisation, and rate limiting on all endpoints processing sensitive data.

Establish Security Champions: Designate experienced developers in each team as security champions who receive additional security training and serve as first-line security resources.

Create Secure Code Templates: Provide reusable templates and libraries for common patterns like authentication, database access, and encryption so secure implementation is the easiest path.

Schedule Expert Reviews: For critical applications processing sensitive data, supplement automated checks with expert penetration testing focused on business logic flaws automated tools miss.

Measure and Improve: Track metrics like time-to-fix by severity, vulnerability types by component, and false positive rates to identify where security integration needs improvement.

Making Security Automatic

Data protection in modern development isn’t about security gates that slow velocity. It’s about automated guardrails integrated into CI/CD pipelines that catch data exposure vulnerabilities before production deployment.

The technical controls exist: secrets management, parameterised queries, encryption libraries, automated security scanning. The operational challenge is making these controls automatic parts of development workflow rather than manual security reviews that happen too late.

Development teams that integrate security from initial design through production deployment – with automated checks for technical vulnerabilities and expert reviews for business logic flaws – ship secure code at velocity. Those treating security as a separate phase ship data exposure vulnerabilities that persist for months after discovery.

The vulnerability and remediation data make the business case clear. The automation technology makes implementation practical. The question is whether your organisation builds data protection into the SDLC before attackers exploit preventable vulnerabilities.
