Automation has earned its place in modern security. Continuous vulnerability scanning, DAST, and configuration checks provide scale and speed that manual testing cannot match. But equating automated “penetration testing” with real penetration testing is a category error with material risk.

Scanners discover known issues at machine speed. Expert testers uncover unknown attack paths at adversary speed. The difference shows up in your coverage, your exposure, and ultimately your profit and loss statement.

Scanners vs. Penetration Tests: Different Instruments, Different Outcomes

The distinction isn’t semantic. Vulnerability scanning and penetration testing serve different purposes.

Scanners compare assets against databases of known flaws (CVEs), misconfigurations, and compliance checks. They excel at finding technical vulnerabilities that match known patterns. According to Edgescan’s 2025 Vulnerability Statistics Report, SQL injection accounts for 28.28% of critical and high severity application vulnerabilities – exactly the type of issue automated scanning handles efficiently.

Penetration testing simulates real adversaries. Expert testers validate exploitability, chain weaknesses across systems, and demonstrate business impact. They don’t just report that a vulnerability exists – they prove how attackers would actually exploit it.

That distinction matters most where scanners are weakest: business logic flaws, privilege escalation paths, and chained exploits across applications, APIs, cloud roles, and identity systems.

OWASP explicitly states that business logic vulnerabilities – abuses of legitimate functionality arising from flawed workflows or assumptions – “cannot be detected by a vulnerability scanner” and demand human-led testing. PortSwigger’s research confirms these logic flaws are invisible to routine automated use but exploitable through unintended interactions.

Attackers take the human route. The MITRE ATT&CK framework documents lateral movement and multi-stage chaining as standard practice: pivoting through systems, harvesting credentials, exploiting remote services, and abusing legitimate tools. No scanner can emulate that adaptive path.

Real-World Examples: What Scanners Miss

Capital One (2019): A misconfigured Web Application Firewall enabled SSRF access to cloud metadata, yielding temporary credentials and S3 data exfiltration. This attack blended configuration issues, identity exploitation, and cloud logic. The incident drew an $80 million penalty from the OCC alone, before settlements and remediation costs – well beyond any testing cost savings.

Uber (2022): The attacker used MFA fatigue (push bombing) and social engineering to gain initial access, then located hardcoded credentials and moved laterally. This wasn’t a CVE hunt – it was an adversary exploiting human factors and logic gaps in identity and privilege processes.

API and Business Logic Cases: The USPS Informed Delivery API allowed account data exposure due to broken access controls. Other documented incidents include coupon abuse, gift card manipulation via workflow logic errors, and missing server-side validation – issues scanners typically flag as “informational” if they detect them at all.

These incidents share a consistent theme: complex, contextual, and cross-domain vulnerabilities are strategy problems, not signature problems. Automated tools, even sophisticated ones, cannot understand business rules or trust boundaries, and they cannot ask “what shouldn’t be possible here?” – a question expert testers answer routinely.

Coverage Blind Spots: Why “Automated Pen Testing” Is Lipstick on a Pig

Vendors now market “automated penetration testing” – enhanced scanning dressed in adversarial testing vocabulary. The outcome is false assurance: impressive dashboards, extensive findings lists, but limited ability to validate exploit chains, prove business impact, or uncover logic abuse.

OWASP’s testing guidance explicitly cautions that detecting business logic flaws “is not possible” with scanners and remains a manual discipline requiring knowledge of business processes.

Executives should be wary of any offering that:

Never attempts manual exploitation or scenario chaining: That’s scanning, not penetration testing. The difference isn’t academic – it’s operational.

Reports findings only in CVSS terms without business impact: That’s vulnerability inventory, not threat simulation. A 9.8 CVSS score means nothing if the vulnerability isn’t actually exploitable in your environment.

Ignores identity paths, misaligned privileges, and lateral movement: That’s where adversaries operate. Modern attacks chain identity exploitation, privilege escalation, and lateral movement – capabilities scanners cannot emulate.

Calling scanning “pen testing” is lipstick on a pig. You still have the pig – blind spots in workflows, identity systems, and chained exposures – just wearing prettier reports.

Cost vs. Value: The Executive Ledger

Saving £3,000 to £10,000 by choosing automation-only assessment over expert-led penetration testing looks attractive on budget lines. But security economics depend on expected loss, not invoice totals.

Consider the benchmarks:

Average global breach cost (2024): $4.88 million, up 10% year-over-year. IBM’s research shows extensive use of security AI and automation reduced breach costs by approximately $2.2 million on average – evidence that the right automation pays dividends when paired with expert strategy.

U.S. breach costs (2025): Trending even higher at $10.22 million average, with detection, escalation labour, and regulatory penalties as primary cost drivers.

Healthcare breaches: Regularly exceed $7 million to $10 million in total costs, factoring regulatory fines, remediation, customer notification, and reputation damage.

Compare this exposure to the cost difference between scanner-only testing and comprehensive programmes blending automation with expert penetration testing. Even if an expert-led engagement costs £25,000 to £60,000, is that material compared to a single logic flaw incident driving multi-million-pound losses, regulatory fines, class actions, and customer attrition?

Executive calculation:

- Automation-only savings: £5,000 to £20,000 per cycle

- Expected downside of a missed logic flaw: $4 million to $10 million+ in direct and indirect costs, plus board time, customer attrition, and opportunity cost

Measured against expected loss rather than invoice totals, that saving is a negative-ROI decision.
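The ledger above can be expressed as a break-even probability: the chance of a missed-logic-flaw breach per cycle above which the automation-only saving stops paying for itself. The following is a minimal sketch, not a risk model. It assumes pounds and dollars can be compared at parity for illustration, and the function names and the 1% probability in the last line are hypothetical, not figures from any report.

```python
def break_even_probability(savings: float, breach_loss: float) -> float:
    """Breach probability per cycle at which the saving equals the expected loss."""
    if breach_loss <= 0:
        raise ValueError("breach_loss must be positive")
    return savings / breach_loss


def expected_net(savings: float, breach_loss: float, p_breach: float) -> float:
    """Saving minus expected loss for an assumed breach probability."""
    return savings - p_breach * breach_loss


# Ledger figures, currencies treated at parity (assumption).
# Most favourable case for automation-only: largest saving, smallest loss.
print(f"{break_even_probability(20_000, 4_000_000):.2%}")   # 0.50%
# Least favourable case: smallest saving, largest loss.
print(f"{break_even_probability(5_000, 10_000_000):.2%}")   # 0.05%

# Hypothetical 1% chance per cycle of a missed logic flaw being exploited.
print(expected_net(20_000, 4_000_000, 0.01))                # -20000.0
```

On these figures, automation-only testing breaks even only if the chance of a missed logic flaw leading to a breach stays below roughly 0.05% to 0.5% per cycle.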

Edgescan’s 2025 data adds context: organisations average 74.3 days to remediate critical application vulnerabilities. Large enterprises leave 45.4% of discovered vulnerabilities unresolved after 12 months. Part of this backlog problem stems from false positives and poorly prioritised findings. But another part comes from missing the high-risk vulnerabilities that automation cannot detect – business logic flaws that remain undiscovered until exploitation.

What Real Security Looks Like: Technology + Expertise

The winning model combines technology efficiency with human expertise:

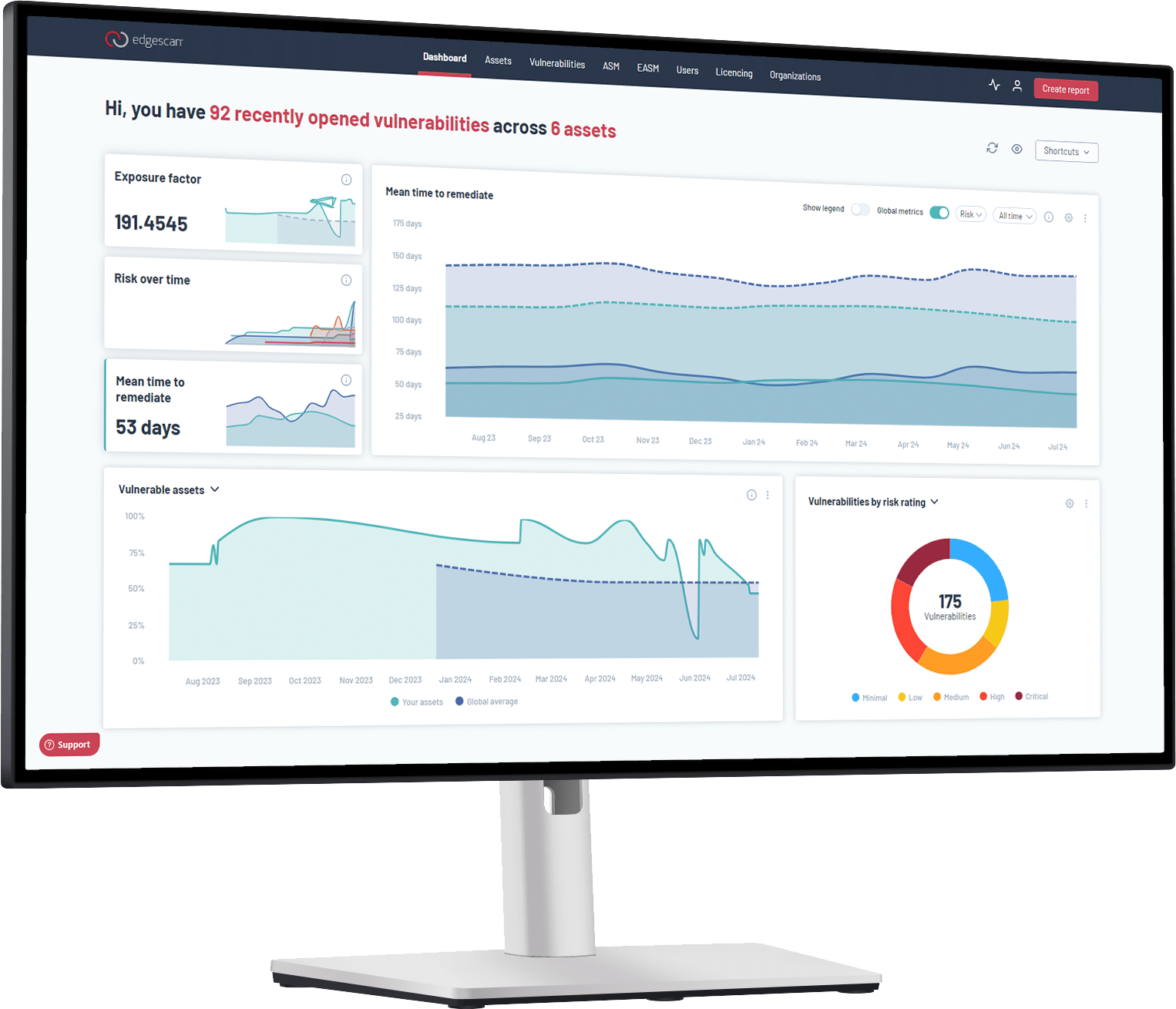

Continuous Scanning for Breadth: Use authenticated scanning to keep pace with patchable, known issues. Meet compliance requirements (PCI DSS, ISO 27001) and reduce mean time to remediation. Edgescan’s platform delivers unlimited DAST as part of PTaaS – continuous assessment that establishes baseline security hygiene.

Expert Penetration Testing for Depth: Scope critical business processes, simulate adversarial chaining, validate exploitability, and quantify business impact. PCI DSS v4.0 explicitly distinguishes penetration testing from vulnerability scanning and requires methodical testing that covers both network and application layers.

Business Logic Testing: Model workflows, rate limits, and trust boundaries. Test step-skipping, negative-value inputs, server-side validation bypasses, and API authorisation paths that scanners miss entirely. Edgescan’s data shows business logic vulnerabilities account for 11% of critical findings discovered through expert testing – vulnerabilities automated tools never detect.
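To make step-skipping and negative-value inputs concrete, here is a minimal sketch of the kind of server-side rule a tester probes for. The checkout workflow, the `Order` type, and every function name are hypothetical and exist only for illustration. Both rejected requests are well-formed, which is why pattern-matching tools tend not to flag them.

```python
from dataclasses import dataclass


class BusinessRuleViolation(Exception):
    """Raised when a well-formed request breaks a workflow rule."""


# Hypothetical checkout workflow: each step may only follow the one before it.
WORKFLOW = ["cart", "shipping", "payment", "confirmed"]


@dataclass
class Order:
    unit_price_pence: int
    quantity: int = 1
    step: str = "cart"

    def total_pence(self) -> int:
        return self.unit_price_pence * self.quantity


def set_quantity(order: Order, quantity: int) -> None:
    # A zero or negative quantity would turn a charge into a credit.
    if type(quantity) is not int or quantity < 1:
        raise BusinessRuleViolation("quantity must be a positive integer")
    order.quantity = quantity


def advance(order: Order, target: str) -> None:
    # Reject step-skipping, e.g. jumping from cart straight to confirmed.
    expected = WORKFLOW.index(order.step) + 1
    if target not in WORKFLOW or WORKFLOW.index(target) != expected:
        raise BusinessRuleViolation(f"cannot move from {order.step} to {target}")
    order.step = target
```

A tester would call `set_quantity(order, -5)` or `advance(order, "confirmed")` against the live workflow and check whether the server refuses. If either succeeds, that is a business logic finding.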

Attack Chain Validation: Build scenarios around identity compromise, MFA resistance testing, lateral movement, and cloud role abuse, following the MITRE ATT&CK framework. Prove how individual vulnerabilities combine into successful breaches.

Validated Intelligence: Use hybrid validation – automation for volume, expert analysis for accuracy. Edgescan’s 2025 report shows 92% of vulnerabilities are validated through automated analysis, while 8% require expert human review by CREST and OSCP-certified analysts. This eliminates false positives while catching complex threats.

Practical Guidance for Leaders

Treat scanners as hygiene, not assurance: Quarterly scans establish baseline security but don’t validate exploit chains or business impact. They’re necessary but insufficient.

Fund at least annual expert-led penetration testing: Focus on highest-value workflows and identity paths, particularly after significant changes. Tie findings to risk registers with loss scenarios – revenue at risk, regulatory exposure, downtime costs.

Demand evidence of exploitability: “High CVSS” without a demonstrated attack path is noise. Require testers to prove how vulnerabilities actually compromise your business.

Integrate findings into engineering: Fix logic flaws with server-side controls, rate limits, and least-privilege access. Prevent SSRF and metadata access. Remove hardcoded secrets. Instrument anomaly detection for identity misuse.
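As one illustration of the “prevent SSRF and metadata access” item, the sketch below refuses outbound fetches whose destination resolves to an internal, loopback, or link-local address, which includes the cloud metadata address 169.254.169.254. It uses only the Python standard library. The function names and the injectable `resolver` parameter are assumptions made for this example, and the check does not address DNS rebinding between validation and fetch, so treat it as a starting point rather than a complete control.

```python
import ipaddress
import socket
from urllib.parse import urlparse


def resolve(host: str) -> list[str]:
    """Return the IP addresses the host currently resolves to."""
    return [info[4][0] for info in socket.getaddrinfo(host, None)]


def is_safe_outbound_url(url: str, resolver=resolve) -> bool:
    """Allow only http(s) URLs whose host resolves to public addresses."""
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https") or not parsed.hostname:
        return False
    try:
        addresses = resolver(parsed.hostname)
    except OSError:
        return False  # unresolvable hosts are rejected
    for address in addresses:
        try:
            ip = ipaddress.ip_address(address)
        except ValueError:
            return False
        # Link-local covers 169.254.169.254, the cloud metadata address.
        if (ip.is_private or ip.is_loopback or ip.is_link_local
                or ip.is_reserved or ip.is_multicast or ip.is_unspecified):
            return False
    return bool(addresses)
```

Passing a stub resolver, for example `is_safe_outbound_url(url, resolver=lambda host: ["169.254.169.254"])`, lets the rule be unit-tested without network access.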

Measure value, not just cost: Use breach cost benchmarks to quantify avoided loss when expert testing and validated intelligence close critical gaps before exploitation.

Moving Forward

Automated tools are indispensable for modern security programmes. But automated “penetration testing” remains scanning – fast, useful, but fundamentally limited.

It leaves blind spots where modern attackers thrive: business logic exploitation, identity abuse, lateral movement, and attack chains that defy signature-based detection. Attackers think creatively, socially, and strategically. They only need one overlooked path.

Short-term savings on automation-only testing look attractive on budget lines. But breach cost mathematics make the calculation clear: comprehensive security requires technology efficiency combined with expert human testing that discovers and mitigates exposures at adversary speed.

Anything less is, frankly, lipstick on a pig – and a risk your balance sheet shouldn’t carry.
